I recently picked up an IP camera to play around with, so that I could build an app that displays the camera's live video stream. I chose the D-Link DCS-942L because it supports streaming video to a UDP socket using the RTSP and RTP protocols, and consumer reviews indicated that it was easy to set up and use. The camera supports two video encodings, H.264 and MPEG4, both of which iOS supports (to a certain extent; more on that later).

I wanted to build an iOS app that worked with the IP camera, but ended up building an Android app instead. I'll explain why later. There are already iOS and Android apps for viewing video from the DCS-942L, but I thought it would be fun to build my own and add a few custom features.

Background

In case you are not familiar with the basics of video streaming, here’s a brief overview of the terms I mentioned above. When I started this pet project I didn’t know much about these things, but that’s what pet projects are good for!

UDP – User Datagram Protocol is a transport protocol that delivers "datagrams" (chunks of data with some networking metadata attached) from one computer to another. A datagram might carry just a small piece of the full data object being transferred between two computers. Unlike TCP, UDP does not require a connection to be established and kept alive between the two computers. One computer tells another its IP address and the port on which it expects to receive datagrams, and as long as a socket is listening on that port, the datagrams keep flowing in. UDP also makes no guarantee that datagrams will arrive in the correct order, or arrive at all. The UDP header has no sequence number, so protocols layered on top of UDP (such as RTP, described below) add their own. It is the application developer's job to reassemble the data and cope with missing datagrams. UDP is commonly used for applications that do not benefit from TCP's strong delivery guarantees, such as video streaming, where dropping an occasional frame or two is not a noticeable problem.
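To make the connectionless model concrete, here is a minimal plain-Java sketch (not part of the app) that sends one datagram to a socket listening on the loopback interface and reads it back. The class and method names are hypothetical. Note that there is no handshake, just an address, a port, and a timeout in case the datagram never arrives.

```java
import java.io.IOException;
import java.io.UncheckedIOException;
import java.net.DatagramPacket;
import java.net.DatagramSocket;
import java.net.InetAddress;
import java.nio.charset.StandardCharsets;

class UdpLoopback {

    // Sends one datagram to a socket in the same process and returns what arrived.
    static String echoOnce(String message) {
        InetAddress loopback = InetAddress.getLoopbackAddress();
        // Port 0 lets the OS pick any free port for the listening socket.
        try (DatagramSocket receiver = new DatagramSocket(0, loopback);
             DatagramSocket sender = new DatagramSocket()) {
            // Don't block forever: UDP may silently drop the datagram.
            receiver.setSoTimeout(2000);

            // No connection setup: the sender only needs the receiver's address and port.
            byte[] out = message.getBytes(StandardCharsets.UTF_8);
            sender.send(new DatagramPacket(out, out.length, loopback, receiver.getLocalPort()));

            byte[] buffer = new byte[1500]; // room for one Ethernet-sized datagram
            DatagramPacket in = new DatagramPacket(buffer, buffer.length);
            receiver.receive(in);
            return new String(in.getData(), in.getOffset(), in.getLength(), StandardCharsets.UTF_8);
        } catch (IOException e) {
            throw new UncheckedIOException(e);
        }
    }
}
```

On a real network, nothing guarantees that the datagram arrives. The timeout turns that case into an exception instead of a hang.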

RTSP – Real-Time Streaming Protocol is similar to HTTP, but it is built specifically for setting up, controlling, and tearing down streaming media sessions. Examples include a video chat or a movie streamed to a computer from a streaming media server. RTSP does not carry the video itself; it only manages the streaming session. It defines a set of methods, such as DESCRIBE and SETUP:

- DESCRIBE asks the media source to describe its available media streams and their capabilities.
- SETUP is how the client tells the server which stream it wants to consume and how to deliver it. The server's response includes a session ID.

Other methods, such as PLAY and TEARDOWN, start and end delivery.
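RTSP messages are plain text, much like HTTP requests. The sketch below is a hypothetical helper, not the C++ processor I wrote. It does three things:

- builds a DESCRIBE request, laid out as RFC 2326 specifies;
- builds a SETUP request;
- pulls the session ID out of a SETUP response.

The track URL suffix `/track1` and client port 5000 used in the example are made-up values. A real client reads the track URL from the SDP that DESCRIBE returns. It would also add the Authorization header my camera requires.

```java
import java.util.LinkedHashMap;
import java.util.Map;

class RtspMessages {

    // Request line, the mandatory CSeq header, any extra headers, then a blank line.
    static String request(String method, String url, int cseq, Map<String, String> headers) {
        StringBuilder sb = new StringBuilder();
        sb.append(method).append(' ').append(url).append(" RTSP/1.0\r\n");
        sb.append("CSeq: ").append(cseq).append("\r\n");
        for (Map.Entry<String, String> header : headers.entrySet()) {
            sb.append(header.getKey()).append(": ").append(header.getValue()).append("\r\n");
        }
        return sb.append("\r\n").toString();
    }

    // DESCRIBE: ask the server to describe its media streams as SDP.
    static String describe(String url, int cseq) {
        Map<String, String> headers = new LinkedHashMap<>();
        headers.put("Accept", "application/sdp");
        return request("DESCRIBE", url, cseq, headers);
    }

    // SETUP: tell the server where to send RTP (even port) and RTCP (the next odd port).
    static String setup(String trackUrl, int cseq, int rtpPort) {
        Map<String, String> headers = new LinkedHashMap<>();
        headers.put("Transport",
                "RTP/AVP;unicast;client_port=" + rtpPort + "-" + (rtpPort + 1));
        return request("SETUP", trackUrl, cseq, headers);
    }

    // Extracts the ID from a header such as "Session: 12345678;timeout=60".
    // Returns null when the response has no Session header.
    static String sessionId(String response) {
        for (String line : response.split("\r\n")) {
            int colon = line.indexOf(':');
            if (colon > 0 && line.substring(0, colon).trim().equalsIgnoreCase("Session")) {
                String value = line.substring(colon + 1).trim();
                int semicolon = value.indexOf(';');
                return semicolon >= 0 ? value.substring(0, semicolon).trim() : value;
            }
        }
        return null;
    }
}
```

These requests travel over a TCP connection (port 554 in my camera's URL). Once the session is playing, the video itself arrives as RTP over UDP, on the ports named in the Transport header.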

RTP – Real-time Transport Protocol carries video (and audio) in packets that can be streamed between computers. Each RTP packet has a sequence number and a timestamp, so the receiver can put packets back in order and detect gaps. The packets are usually sent inside UDP datagrams. To get a feel for the kind of processing involved with RTP video streams, check out this high-level overview of the process on StackOverflow.
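Here is a minimal sketch that decodes the 12-byte fixed RTP header defined in RFC 3550. It assumes each UDP payload holds exactly one RTP packet. It also includes a wraparound-aware sequence number comparison that you could use to reorder packets. The class name and structure are my own, and padding is ignored.

```java
final class RtpPacket {
    final int version;
    final boolean marker;
    final int payloadType;
    final int sequenceNumber; // 16 bits; wraps from 65535 back to 0
    final long timestamp;     // 32 bits, unsigned
    final long ssrc;          // 32 bits, identifies the media source
    final int payloadOffset;  // index of the first media byte

    private RtpPacket(int version, boolean marker, int payloadType, int sequenceNumber,
                      long timestamp, long ssrc, int payloadOffset) {
        this.version = version;
        this.marker = marker;
        this.payloadType = payloadType;
        this.sequenceNumber = sequenceNumber;
        this.timestamp = timestamp;
        this.ssrc = ssrc;
        this.payloadOffset = payloadOffset;
    }

    static RtpPacket parse(byte[] p, int length) {
        if (length < 12) {
            throw new IllegalArgumentException("Too short for an RTP header");
        }
        int version = (p[0] & 0xC0) >>> 6;
        boolean hasExtension = (p[0] & 0x10) != 0;
        int csrcCount = p[0] & 0x0F;
        boolean marker = (p[1] & 0x80) != 0;
        int payloadType = p[1] & 0x7F;
        int sequenceNumber = ((p[2] & 0xFF) << 8) | (p[3] & 0xFF);
        long timestamp = readUint32(p, 4);
        long ssrc = readUint32(p, 8);

        // Skip the optional CSRC list and header extension to find the payload.
        int offset = 12 + 4 * csrcCount;
        if (hasExtension) {
            if (offset + 4 > length) {
                throw new IllegalArgumentException("Truncated header extension");
            }
            int extensionWords = ((p[offset + 2] & 0xFF) << 8) | (p[offset + 3] & 0xFF);
            offset += 4 + 4 * extensionWords;
        }
        if (offset > length) {
            throw new IllegalArgumentException("Truncated RTP header");
        }
        return new RtpPacket(version, marker, payloadType, sequenceNumber,
                timestamp, ssrc, offset);
    }

    private static long readUint32(byte[] p, int i) {
        return ((p[i] & 0xFFL) << 24) | ((p[i + 1] & 0xFFL) << 16)
                | ((p[i + 2] & 0xFFL) << 8) | (p[i + 3] & 0xFFL);
    }

    // True if sequence number a comes after b, allowing for 16-bit wraparound.
    static boolean isNewer(int a, int b) {
        int diff = (a - b) & 0xFFFF;
        return diff != 0 && diff < 0x8000;
    }
}
```

The wraparound check matters for a long-running camera stream. After packet 65535, the next one is numbered 0, and a naive comparison would treat it as old.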

H.264 and MPEG4 – These are commonly used video compression formats. Most normal humans never need to know or care about how they work or how to decode them, since companies like Apple build support for them into their products. iOS uses hardware acceleration to decode H.264 video, offloading the work from the CPU to dedicated hardware. I'm not sure whether MPEG4 is hardware accelerated too, but I assume it is. For a taste of what's involved in decoding H.264 that has been packetized for RTP, check out RFC 6184.
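As a small taste of RFC 6184, here is a hypothetical sketch of the first steps in depacketizing H.264:

1. Read the NAL unit type from the first byte of the RTP payload.
2. For a fragmentation unit (FU-A), rebuild the original NAL header.
3. Prefix an Annex B start code, so a decoder can consume the result.

The sketch leaves out much of what a real depacketizer needs, such as unpacking STAP-A packets and appending middle and end fragments.

```java
final class H264RtpPayload {
    static final int STAP_A = 24; // aggregation packet: several NAL units in one RTP packet
    static final int FU_A = 28;   // fragmentation unit: one NAL unit split across RTP packets

    // The low 5 bits of a NAL unit header (or FU indicator) hold the type.
    static int nalType(byte header) {
        return header & 0x1F;
    }

    // In the FU header, bit 7 (S) marks the first fragment and bit 6 (E) the last.
    static boolean isStart(byte fuHeader) {
        return (fuHeader & 0x80) != 0;
    }

    static boolean isEnd(byte fuHeader) {
        return (fuHeader & 0x40) != 0;
    }

    // The original header keeps the F and NRI bits from the FU indicator
    // and takes its type from the FU header.
    static byte originalNalHeader(byte fuIndicator, byte fuHeader) {
        return (byte) ((fuIndicator & 0xE0) | (fuHeader & 0x1F));
    }

    // For the first FU-A fragment, returns an Annex B start code (00 00 00 01),
    // the rebuilt NAL header, and the fragment data after the two FU bytes.
    static byte[] startFragmentToAnnexB(byte[] payload, int length) {
        if (length < 2) {
            throw new IllegalArgumentException("FU-A payload needs at least two bytes");
        }
        byte[] out = new byte[4 + 1 + (length - 2)];
        out[3] = 1;
        out[4] = originalNalHeader(payload[0], payload[1]);
        System.arraycopy(payload, 2, out, 5, length - 2);
        return out;
    }
}
```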

There are myriad other protocols, formats, and terribly geeky lingo in the strange world of streaming media. However, the ones above are what my D-Link DCS-942L IP camera supports, so they are the ones I care about in this project.

So close, yet so far…

It was a lot of fun writing an RTSP protocol processor in C++ and getting it to communicate with my IP camera. My code was able to dance the RTSP dance over a TCP connection and initiate a video streaming session on a port with a UDP socket ready to get busy. All I needed to do next was find a way to make iOS decode the H.264 or MPEG4 video stream and show it. Should be easy, right?

Not right. Not even close to right. I should have researched that before dropping $100 on an IP camera that I don't actually need. The only form of live streaming that iOS's built-in media player supports is an oddball called HTTP Live Streaming, invented by, you guessed it, Apple.

I searched the InterWebs high and low for a way to use the existing iOS support for H.264 and MPEG4 video. That support works great when the videos are saved as files in local storage. As far as I can tell, though, Apple locked things down tightly enough that you can't do what I wanted: display those videos from a stream sent to a UDP socket using industry-standard protocols.

I should point out that there is a way to implement this on iOS, using an open-source library called FFmpeg. After reading several rants about what a headache that library is to set up and use, I asked a buddy of mine who has experience with FFmpeg. He confirmed that it's a massive pain to use, so I dropped that plan.

Android to the rescue (?!)

I own a relatively new Nexus 4 phone that I use to test my code on a real device while learning Android programming. It turns out that as of API level 12, Android can show a video stream sent via RTP. It even handles all of the RTSP communication for you! Take that, Apple.

After about half an hour of Web searching and coding I put together an Android app (running on Android 4.3) that shows the video stream from my IP camera. That was easy.

Normally this blog is about iOS development, but since Apple really let me down this time, my blog will have a cameo appearance by that green Android robot.

Google added support for RTP video streaming in API level 12. However, the MediaPlayer.setDataSource overload that accepts request headers (used below to send the camera's credentials) arrived in API level 14, so your project's minimum SDK version should be at least 14. Mine is 18. To allow your app to use the video streaming feature, put these in the AndroidManifest.xml file:

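```xml
<!-- INTERNET is required so MediaPlayer can reach the camera over the network. -->
<uses-permission android:name="android.permission.INTERNET" />
<!-- RECORD_AUDIO was part of my setup; playing a stream is not expected to need it. -->
<uses-permission android:name="android.permission.RECORD_AUDIO" />
```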

Next we need to create the UI element that will display video being streamed from an IP camera. Open your activity’s layout file and add a SurfaceView element, like so:

```xml
<RelativeLayout
    xmlns:android="http://schemas.android.com/apk/res/android"
    android:layout_width="match_parent"
    android:layout_height="match_parent">

    <SurfaceView
        android:id="@+id/surfaceView"
        android:layout_width="match_parent"
        android:layout_height="match_parent" />

</RelativeLayout>
```

The rest of the implementation lives in the activity subclass that displays the layout created above. The code below creates the activity's UI and makes it full-screen. If you use this code, be sure to update the RTSP_URL string to use your camera's local IP address, and update the credentials to match your camera's.

```java
import android.app.Activity;
import android.content.Context;
import android.media.MediaPlayer;
import android.net.Uri;
import android.os.Bundle;
import android.util.Base64;
import android.util.Log;
import android.view.SurfaceHolder;
import android.view.SurfaceView;
import android.view.Window;
import android.view.WindowManager;

import java.util.HashMap;
import java.util.Map;

public class MainActivity extends Activity
        implements MediaPlayer.OnPreparedListener, SurfaceHolder.Callback {

    // Replace these with your camera's credentials and RTSP URL.
    final static String USERNAME = "admin";
    final static String PASSWORD = "camera";
    final static String RTSP_URL = "rtsp://10.0.1.7:554/play1.sdp";

    private MediaPlayer _mediaPlayer;
    private SurfaceHolder _surfaceHolder;

    @Override
    protected void onCreate(Bundle savedInstanceState) {
        super.onCreate(savedInstanceState);

        // Set up a full-screen black window.
        requestWindowFeature(Window.FEATURE_NO_TITLE);
        Window window = getWindow();
        window.setFlags(
                WindowManager.LayoutParams.FLAG_FULLSCREEN,
                WindowManager.LayoutParams.FLAG_FULLSCREEN);
        window.setBackgroundDrawableResource(android.R.color.black);

        setContentView(R.layout.activity_main);

        // Configure the view that renders live video.
        SurfaceView surfaceView =
                (SurfaceView) findViewById(R.id.surfaceView);
        _surfaceHolder = surfaceView.getHolder();
        _surfaceHolder.addCallback(this);
        _surfaceHolder.setFixedSize(320, 240);
    }

    // More to come...
}
```

Note that the activity registers itself as the SurfaceHolder's callback, so it learns when the rendering surface is ready for use. At that point we set up the MediaPlayer object, which handles the RTSP communication and the RTP video streaming work. When the surface is destroyed, the media player is disposed of via its release method. That can happen because the device orientation changed or because the app is terminating.

```java
/*
 * SurfaceHolder.Callback
 */

@Override
public void surfaceChanged(
        SurfaceHolder sh, int f, int w, int h) {}

@Override
public void surfaceCreated(SurfaceHolder sh) {
    _mediaPlayer = new MediaPlayer();
    _mediaPlayer.setDisplay(_surfaceHolder);

    Context context = getApplicationContext();
    Map<String, String> headers = getRtspHeaders();
    Uri source = Uri.parse(RTSP_URL);

    try {
        // Specify the IP camera's URL and auth headers.
        _mediaPlayer.setDataSource(context, source, headers);

        // Begin the process of setting up a video stream.
        _mediaPlayer.setOnPreparedListener(this);
        _mediaPlayer.prepareAsync();
    }
    catch (Exception e) {
        // Log instead of swallowing: a bad URL or unreachable camera fails here.
        Log.e("MainActivity", "Could not start the RTSP stream", e);
    }
}

@Override
public void surfaceDestroyed(SurfaceHolder sh) {
    if (_mediaPlayer != null) {
        _mediaPlayer.release();
        _mediaPlayer = null;
    }
}
```

My IP camera requires every RTSP request to carry an 'Authorization' header that uses the Basic scheme. The credentials are the ones I typed in when running the camera's setup wizard software. Basic auth only Base64-encodes the username and password; it does not encrypt them. Anyone sniffing the network can read my ever-so-confidential credentials, so this is a very insecure scheme. The code above uses a helper method to build the headers needed to communicate with the RTSP server, in this case just an Authorization header. Here's how I implemented that.

```java
private Map<String, String> getRtspHeaders() {
    Map<String, String> headers = new HashMap<String, String>();
    String basicAuthValue = getBasicAuthValue(USERNAME, PASSWORD);
    headers.put("Authorization", basicAuthValue);
    return headers;
}

private String getBasicAuthValue(String usr, String pwd) {
    String credentials = usr + ":" + pwd;
    // Basic auth uses the standard Base64 alphabet, so don't pass URL_SAFE
    // (it turns '+' and '/' into '-' and '_'). NO_WRAP keeps the value on one line.
    int flags = Base64.NO_WRAP;
    byte[] bytes = credentials.getBytes();
    return "Basic " + Base64.encodeToString(bytes, flags);
}
```
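To check the header value away from the device, here is a plain-Java sketch of the same Basic scheme (RFC 7617) using java.util.Base64. On Android, that class only exists from API level 26; in the app itself, android.util.Base64 with NO_WRAP does the job. The class name is hypothetical. Encoding the credentials as UTF-8 is an assumption, though it makes no difference for plain ASCII usernames and passwords.

```java
import java.nio.charset.StandardCharsets;
import java.util.Base64;

class BasicAuth {

    // Builds an Authorization header value: "Basic " + base64(user ":" password).
    static String headerValue(String user, String password) {
        String credentials = user + ":" + password;
        // Standard alphabet (with '+' and '/'), not the URL-safe variant.
        String encoded = Base64.getEncoder()
                .encodeToString(credentials.getBytes(StandardCharsets.UTF_8));
        return "Basic " + encoded;
    }
}
```

With the credentials from the activity above, `headerValue("admin", "camera")` yields `Basic YWRtaW46Y2FtZXJh`. The alphabet matters for some inputs: the credentials string `:>>>` encodes to `Oj4+Pg==` in standard Base64, but to `Oj4-Pg==` with the URL-safe alphabet. A camera expecting the standard alphabet is likely to reject the URL-safe form.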

Last but not least, we have the OnPreparedListener implementation for the MediaPlayer object. MediaPlayer invokes it once preparation finishes, some time after the call to prepareAsync shown earlier. All it needs to do is start playback.

```java
/*
 * MediaPlayer.OnPreparedListener
 */

@Override
public void onPrepared(MediaPlayer mp) {
    // mp is the player that just finished preparing (_mediaPlayer).
    mp.start();
}
```

A few seconds after that code executes I begin to see the video stream displayed on my Android phone. Very cool!

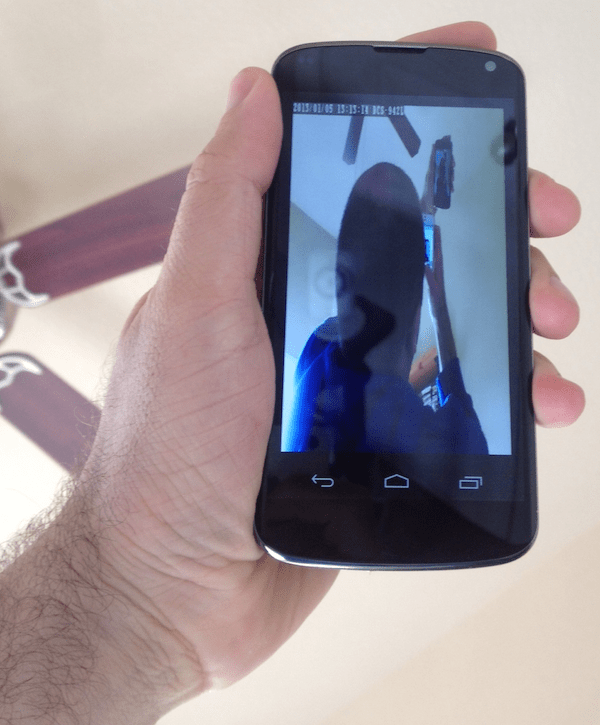

Recursive Selfies

Here is a shot of my IP camera and my Android phone, which is showing the video being streamed from the camera.

When several cameras are being used at the same time, you can start getting into some weird recursive situations, such as this picture of me taking a picture of myself taking a picture…

If you really want to get freaky with it, open the camera's built-in web interface on a large screen so it fills with streaming video, then hold the Android phone up next to it. This starts to get pretty confusing after a while!

Well, I hope you learned a thing or two out of all this. I know that I did. Happy programming!

Comments

Kindly share the code with me; if that's not possible, please share the APK file.

Kindly send the app by email.

ubeterknowme@gmail.com

Very good project! Could you provide the code? Email: iogo_114@hotmail.com

Can you share your full code with me at idrisyildiz7@gmail.com?

I tried the code above, but I get this error:

Mediaserver died in 16 state

My full Java code:

```java
import java.util.HashMap;
import java.util.Map;

import com.example.smarthomedemo.R;

import android.app.Activity;
import android.content.Context;
import android.content.Intent;
import android.media.AudioManager;
import android.media.MediaPlayer;
import android.net.Uri;
import android.os.Bundle;
import android.util.Base64;
import android.util.Log;
import android.view.KeyEvent;
import android.view.SurfaceHolder;
import android.view.SurfaceView;
import android.view.Window;
import android.view.WindowManager;

public class IpCam extends Activity implements MediaPlayer.OnPreparedListener,
        SurfaceHolder.Callback
{
    final static String USERNAME = "admin";
    final static String PASSWORD = "admin";
    final static String RTSP_URL = "rtsp://192.168.2.237:554/video.mp4";

    private MediaPlayer _mediaPlayer;
    private SurfaceHolder _surfaceHolder;

    @Override
    protected void onCreate(Bundle savedInstanceState) {
        super.onCreate(savedInstanceState);
        // Set up a full-screen black window.
        requestWindowFeature(Window.FEATURE_NO_TITLE);
        Window window = getWindow();
        window.setFlags(WindowManager.LayoutParams.FLAG_FULLSCREEN,
                WindowManager.LayoutParams.FLAG_FULLSCREEN);
        window.setBackgroundDrawableResource(android.R.color.black);
        setContentView(R.layout.activity_ip_cam);
        // Configure the view that renders live video.
        SurfaceView surfaceView = (SurfaceView) findViewById(R.id.surfaceView);
        _surfaceHolder = surfaceView.getHolder();
        _surfaceHolder.addCallback(this);
        _surfaceHolder.setFixedSize(320, 240);
    }

    @Override
    public void surfaceChanged(SurfaceHolder sh, int f, int w, int h)
    {
    }

    @Override
    public void surfaceCreated(SurfaceHolder sh)
    {
        _mediaPlayer = new MediaPlayer();
        _mediaPlayer.setDisplay(_surfaceHolder);
        Context context = getApplicationContext();
        Map<String, String> headers = getRtspHeaders();
        Uri source = Uri.parse(RTSP_URL);
        try {
            // Specify the IP camera's URL and auth headers.
            _mediaPlayer.setDataSource(context, source, headers);
            // Begin the process of setting up a video stream.
            _mediaPlayer.setOnPreparedListener(this);
            _mediaPlayer.setOnPreparedListener(new MediaPlayer.OnPreparedListener()
            {
                @Override
                public void onPrepared(MediaPlayer mp)
                {
                    mp.start();
                }
            });
            _mediaPlayer.prepareAsync();
        }
        catch (Exception e)
        {
        }
    }

    @Override
    public void surfaceDestroyed(SurfaceHolder sh)
    {
        _mediaPlayer.release();
    }

    private Map<String, String> getRtspHeaders()
    {
        Map<String, String> headers = new HashMap<String, String>();
        String basicAuthValue = getBasicAuthValue(USERNAME, PASSWORD);
        headers.put("Authorization", basicAuthValue);
        return headers;
    }

    private String getBasicAuthValue(String usr, String pwd)
    {
        String credentials = usr + ":" + pwd;
        int flags = Base64.URL_SAFE | Base64.NO_WRAP;
        byte[] bytes = credentials.getBytes();
        return "Basic " + Base64.encodeToString(bytes, flags);
    }

    @Override
    public void onPrepared(MediaPlayer mp)
    {
        mp.start();
    }

    @Override
    public boolean onKeyDown(int keyCode, KeyEvent event)
    {
        // Go back to SecurityActivity when Back is pressed.
        if (keyCode == KeyEvent.KEYCODE_BACK)
        {
            Intent myIntent = new Intent(IpCam.this, SecurityActivity.class);
            startActivityForResult(myIntent, 0);
            finish();
            return true;
        }
        return super.onKeyDown(keyCode, event);
    }
}
```

.xml

Please help me.

Hi, could anyone please send me full Real-Time Streaming Protocol source code? I tried the code above, but it's not working. Please send it to my email: temachandru@gmail.com

Hi

I would like to use this in my Android application.

If possible, please send me an email.

Thanks


And I am using TP-Link IP cameras, models SC3230 and SC4171.

Logcat: Mediaserver died in 16 state


And how can I use that code without a username and password? Maybe my IP camera doesn't have a username and password, you know?


Hi Josh,

First of all, thanks for sharing this. I also want to use a D-Link IP camera for video streaming on an Android phone. I have looked at your code here, but one thing confuses me: the RTSP_URL. Where can we find that URL for a D-Link IP camera? Please help me with this.

Another question: does it also stream audio, or is it just video?

Hi Chirag,

Did you get any answers to your questions? I'm also trying to get a video and audio stream on Android. If you find something about it, could you please let me know how to do it? I also need the source code for this post to check it out. Any help would be great.

My e-mail: alfabenefer@gmail.com

Thank you.

A great post, and just what I was looking for! Would it be possible to send me the full source code? Thanks!

Has anybody successfully used this code? I am having problems with it.

If you have gotten this code running, please send it to me at pravin2628@gmail.com.

If you have gotten this code running, please send it to me at iqbalsaycho@gmail.com.

Kindly send the app by email: rafamede4490@gmail.com

I use a TRENDnet TV-110. My program has no errors, but when I run it, the camera image is not displayed. Please help me, and reply to my email: brilianta.fs@gmail.com

This is my code:

```java
package tugasakhir.camera2;

import java.util.HashMap;
import java.util.Map;

import android.os.Bundle;
import android.app.Activity;
import android.view.Menu;
import android.content.Context;
import android.content.Intent;
import android.media.AudioManager;
import android.media.MediaPlayer;
import android.net.Uri;
import android.util.Base64;
import android.util.Log;
import android.view.KeyEvent;
import android.view.SurfaceHolder;
import android.view.SurfaceView;
import android.view.Window;
import android.view.WindowManager;

public class MainActivity extends Activity
        implements MediaPlayer.OnPreparedListener,
        SurfaceHolder.Callback
{
    final static String USERNAME = "admin";
    final static String PASSWORD = "admin";
    final static String RTSP_URL = "rtsp://192.168.1.100/goform/video2";

    private MediaPlayer _mediaPlayer;
    private SurfaceHolder _surfaceHolder;

    @Override
    protected void onCreate(Bundle savedInstanceState)
    {
        super.onCreate(savedInstanceState);

        // Set up a full-screen black window.
        requestWindowFeature(Window.FEATURE_NO_TITLE);
        Window window = getWindow();
        window.setFlags(
                WindowManager.LayoutParams.FLAG_FULLSCREEN,
                WindowManager.LayoutParams.FLAG_FULLSCREEN);
        window.setBackgroundDrawableResource(android.R.color.black);

        setContentView(R.layout.activity_main);

        // Configure the view that renders live video.
        SurfaceView surfaceView =
                (SurfaceView) findViewById(R.id.surfaceView);
        _surfaceHolder = surfaceView.getHolder();
        _surfaceHolder.addCallback(this);
        _surfaceHolder.setFixedSize(320, 240);
    }

    // More to come…

    /*
     * SurfaceHolder.Callback
     */

    @Override
    public void surfaceChanged(
            SurfaceHolder sh, int f, int w, int h) {}

    @Override
    public void surfaceCreated(SurfaceHolder sh) {
        _mediaPlayer = new MediaPlayer();
        _mediaPlayer.setDisplay(_surfaceHolder);

        Context context = getApplicationContext();
        Map<String, String> headers = getRtspHeaders();
        Uri source = Uri.parse(RTSP_URL);

        try {
            // Specify the IP camera's URL and auth headers.
            _mediaPlayer.setDataSource(context, source, headers);

            // Begin the process of setting up a video stream.
            _mediaPlayer.setOnPreparedListener(this);
            _mediaPlayer.prepareAsync();
        }
        catch (Exception e) {}
    }

    @Override
    public void surfaceDestroyed(SurfaceHolder sh) {
        _mediaPlayer.release();
    }

    private Map<String, String> getRtspHeaders() {
        Map<String, String> headers = new HashMap<String, String>();
        String basicAuthValue = getBasicAuthValue(USERNAME, PASSWORD);
        headers.put("Authorization", basicAuthValue);
        return headers;
    }

    private String getBasicAuthValue(String usr, String pwd) {
        String credentials = usr + ":" + pwd;
        int flags = Base64.URL_SAFE | Base64.NO_WRAP;
        byte[] bytes = credentials.getBytes();
        return "Basic " + Base64.encodeToString(bytes, flags);
    }

    /*
     * MediaPlayer.OnPreparedListener
     */

    @Override
    public void onPrepared(MediaPlayer mp) {
        _mediaPlayer.start();
    }
}
```

Thank you for this post. May I too have access to the full source code, please? Thank you!

I want to do the same thing: stream video and play it on my Android phone using an IP address, port, peer ID, and file ID. If you have any idea how to do that, please share it at md.hussain.jh@gmail.com

Thank you in advance…

Thanks for the post.

I would like to ask for a copy of the full source code.

That would be great for viewing my IP camera.

Could you email me at kopihao@gmail.com?

Thanks again.

Sir, I need your help, please. Could you email your full source code to me at sirc182@gmail.com?

Thanks, Sir

Can you share your full code with me at prawit1135@gmail.com?

I tried the code above, but I get that error.

Thank you very much.

Thanks for your tutorial. Can you share your full code with me at henry4343@gmail.com?

Thank you very much.

Can you share your full code with me at trinhphongqn@gmail.com?

Thank you very much.

It looks great. I'm working on something similar: I need to send video from one device to another. I send H.264 video packets over UDP from one device to the other, but I can't manage to decode them and display them on the receiving device. I see that here you just set the URL as the source and the video is displayed. Can this help me, or do you have any other ideas about how I can do this?

Can you explain the code that uses the RTSP protocol for live streaming? How do I implement live streaming in an Android application? Please send me the link or the code at this email address:

jeya.prakas91@gmail.com

Can you give me the Android code that uses the RTSP protocol for live streaming? Please send me the link or the code at this email address:

jeya.prakas13@gmail.com

Hi, can you share the code? Thanks, robehughes@gmail.com

I'm working on Android projects, this time on a streaming server, and was just looking for a way to transfer the video. This source will be helpful to me. Thank you for sharing.

My email address is bemovestills@gmail.com

Sir, could you send your full code to my email, miguel1_1300@yahoo.com? Thanks

Thanks to your source code; it helped me and gave me a hint.

Thank you so very much.

임수창, could you send your full code to my email, idrisyildiz7@gmail.com? Thanks

Hi Josh,

Firstly, thank you so much for this useful post. Would it be possible to get the full source code of this project, if you don't mind? It would be a great help for my project. I'm looking forward to checking it out.

My e-mail: alfabenefer@gmail.com

Thank you!

Thanks for your great tutorial. Can you share your full code with me via email? Here is my email address:

i.parmis@ymail.com

Thank you very much…

Thanks for your tutorial. Can you share your code with me?

gerlanfa@yahoo.com

Thank you very much…

Thanks for your great tutorial. It's very helpful for my project.

Can you share your full code with me via email? lamchiphuong@gmail.com

Thank you very much.

Thanks a lot for your tutorial, ijoshsmith. Can anyone send the project code, please?

My email: ylmzekrm@gmail.com

Send the code to me… please!! ro_d18@naver.com

Hi.

I almost got it working, but after copying and pasting the code and adding the imports, I still get an error: cannot instantiate the type MediaPlayer.

Can anyone help me? Does anyone with the project code mind spending 5 minutes sending it to me?

piresmoreira.ensino@gmail.com

Could you please send me the source code?

kedar.kotkunde@gmail.com